Chai3D

In a previous post we discussed H3DAPI as a useful API for creating visuo-haptic applications. Another great API is Chai3D, which I use for most of the small applications I develop these days. It is a smaller framework that relies on purely imperative C++. Together with the single-file examples, this makes it straightforward to go from getting started to creating advanced haptic effects, provided you are comfortable programming in C++. Chai3D is developed by people from several universities and companies, and it is used, for example, in courses at the University of Calgary and KTH.

Chai3D is modular and device agnostic, so one way to use it is purely as an abstraction layer for the haptic device. That is, you can easily develop an application that works with haptic devices of different brands. The latest release of Chai3D is 3.2, and I have a branch on GitHub with Haptikfabriken support. The branch also includes Windows binaries of the Haptikfabriken API, so you can get started very quickly if you like. On Linux it is also straightforward: clone, build and `make install` haptikfabrikenapi first, then continue with Chai3D.
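To illustrate what device agnostic buys you, here is a minimal sketch of the pattern. The `HapticDevice` interface, `SimulatedDevice` backend and `renderFloor` function are hypothetical names made up for this example; they mirror the idea behind Chai3D's generic device class but are not Chai3D's API. The point is that the application logic only talks to the interface, never to a specific brand's driver.

```cpp
#include <array>
#include <cassert>

// Hypothetical 3D vector type (Chai3D has its own vector class).
using Vec3 = std::array<double, 3>;

// Hypothetical device interface: application code depends only on this.
class HapticDevice {
public:
    virtual ~HapticDevice() = default;
    virtual Vec3 position() const = 0;             // tool position in metres
    virtual void setForce(const Vec3& force) = 0;  // force in newtons
};

// Hypothetical backend: a simulated device that just records the last force.
// A real backend would wrap one vendor's driver instead.
class SimulatedDevice : public HapticDevice {
public:
    explicit SimulatedDevice(const Vec3& pos) : pos_(pos) {}
    Vec3 position() const override { return pos_; }
    void setForce(const Vec3& force) override { lastForce_ = force; }
    Vec3 lastForce() const { return lastForce_; }

private:
    Vec3 pos_;
    Vec3 lastForce_{0.0, 0.0, 0.0};
};

// Device-independent application logic: a virtual floor at z = 0,
// rendered as a simple spring with the given stiffness (N/m).
void renderFloor(HapticDevice& device, double stiffness) {
    assert(stiffness >= 0.0);
    const Vec3 p = device.position();
    Vec3 force{0.0, 0.0, 0.0};
    if (p[2] < 0.0) {
        force[2] = -stiffness * p[2];  // push the tool back up out of the floor
    }
    device.setForce(force);
}
```

With this structure, supporting another brand means adding one more class derived from `HapticDevice`; `renderFloor` and the rest of the application stay unchanged.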

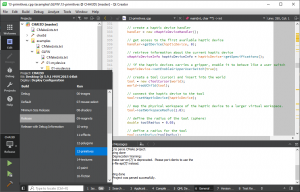

Chai3D uses CMake as its build system (there are other pre-made project files, but they do not include Haptikfabriken support). Either use cmake-gui to configure and generate, e.g., a Visual Studio project, or open and configure it directly in Qt Creator. I prefer the latter, since I like this cross-platform IDE, even for non-Qt projects.

The teapot example in the header picture is 13-primitives, shown above in Qt Creator. As an example, here is how to initialize a haptic device and connect it to a “tool” that handles collision detection and response using the algorithm described by Ruspini et al. (in Chai3D it is called the finger proxy):

```cpp
// create a haptic device handler
handler = new cHapticDeviceHandler();

// get access to the first available haptic device
handler->getDevice(hapticDevice, 0);

// retrieve information about the current haptic device
cHapticDeviceInfo hapticDeviceInfo = hapticDevice->getSpecifications();

// if the haptic device carries a gripper, enable it to behave like a user switch
hapticDevice->setEnableGripperUserSwitch(true);

// create a tool (cursor) and insert it into the world
tool = new cToolCursor(world);
world->addChild(tool);

// connect the haptic device to the tool
tool->setHapticDevice(hapticDevice);

// map the physical workspace of the haptic device to a larger virtual workspace
tool->setWorkspaceRadius(1.0);

// define the radius of the tool (sphere)
double toolRadius = 0.05;

// apply the radius to the tool
tool->setRadius(toolRadius);
```
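Chai3D's finger proxy works on arbitrary meshes and is considerably more involved, but the core idea of Ruspini-style proxy rendering fits in a few lines. In the minimal sketch below (hypothetical names, a single frictionless sphere, not Chai3D's implementation), the proxy follows the device freely in free space but is not allowed to penetrate the object; the force sent to the device is a virtual spring pulling the device towards the proxy. For a single sphere, the admissible point closest to a penetrating device is its radial projection onto the sphere enlarged by the tool radius.

```cpp
#include <array>
#include <cassert>
#include <cmath>

using Vec3 = std::array<double, 3>;

// Small vector helpers for this sketch.
Vec3 vadd(const Vec3& a, const Vec3& b) { return {a[0] + b[0], a[1] + b[1], a[2] + b[2]}; }
Vec3 vsub(const Vec3& a, const Vec3& b) { return {a[0] - b[0], a[1] - b[1], a[2] - b[2]}; }
Vec3 vscale(const Vec3& a, double s) { return {a[0] * s, a[1] * s, a[2] * s}; }
double vlen(const Vec3& a) { return std::sqrt(a[0] * a[0] + a[1] * a[1] + a[2] * a[2]); }

// Hypothetical proxy renderer for one solid sphere and a spherical tool.
class SphereProxy {
public:
    SphereProxy(const Vec3& centre, double radius, double toolRadius, double stiffness)
        : centre_(centre),
          minDist_(radius + toolRadius),
          stiffness_(stiffness),
          proxy_(vadd(centre, Vec3{0.0, 0.0, radius + toolRadius})) {
        assert(radius > 0.0 && toolRadius >= 0.0 && stiffness >= 0.0);
    }

    // One haptic tick: place the proxy as close to the measured device
    // position as the constraint allows, then return the spring force (N).
    Vec3 update(const Vec3& device) {
        const Vec3 d = vsub(device, centre_);
        const double dist = vlen(d);
        if (dist >= minDist_) {
            proxy_ = device;  // free space: proxy coincides with the device
        } else if (dist > 0.0) {
            // contact: radial projection onto the (radius + toolRadius) sphere
            proxy_ = vadd(centre_, vscale(d, minDist_ / dist));
        }
        // dist == 0: direction undefined, so the previous proxy is kept
        return vscale(vsub(proxy_, device), stiffness_);
    }

    const Vec3& proxy() const { return proxy_; }

private:
    Vec3 centre_;
    double minDist_;
    double stiffness_;
    Vec3 proxy_;
};
```

Keeping the proxy as state, rather than computing a penalty force from penetration depth alone, is what lets the full algorithm avoid pushing the tool through thin objects; this sketch keeps that state but omits friction and multi-object constraints.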

The actual haptic rendering is called explicitly from a haptic thread that the application starts itself, running more than 1000 times per second. This makes it easy to follow how it is actually done if you step into the code:

```cpp
// update position and orientation of tool
tool->updateFromDevice();

// compute interaction forces
tool->computeInteractionForces();

// send forces to haptic device
tool->applyToDevice();
```
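The surrounding thread structure can be sketched with the standard library alone. `MockTool` below is a hypothetical stand-in for the tool object, with the same three method names but empty bodies, so the sketch compiles without Chai3D. It uses `std::thread` rather than Chai3D's own thread class, and pacing the loop at a fixed period with `sleep_until` is an assumption of this sketch, not necessarily how the Chai3D examples do it.

```cpp
#include <atomic>
#include <cassert>
#include <chrono>
#include <thread>

// Hypothetical stand-in for a Chai3D tool; counts completed haptic ticks.
struct MockTool {
    std::atomic<long> ticks{0};
    void updateFromDevice() {}          // would read the device pose
    void computeInteractionForces() {}  // would run collision detection and response
    void applyToDevice() { ++ticks; }   // would send the force to the device
};

// Starts the haptic loop on its own thread; it runs until `running` is cleared.
// Both `tool` and `running` must outlive the returned thread.
std::thread startHapticThread(MockTool& tool, std::atomic<bool>& running,
                              std::chrono::microseconds period) {
    assert(period.count() > 0);
    return std::thread([&tool, &running, period] {
        auto next = std::chrono::steady_clock::now();
        while (running.load()) {
            tool.updateFromDevice();
            tool.computeInteractionForces();
            tool.applyToDevice();
            next += period;  // e.g. 1000 us for a 1 kHz loop
            std::this_thread::sleep_until(next);
        }
    });
}
```

To stop, the application clears `running` and joins the thread, so the current iteration finishes before the device is released.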

Another example built with Chai3D is the demo application shown at EuroHaptics. It additionally uses Bullet for physics simulation, for which Chai3D provides a module.